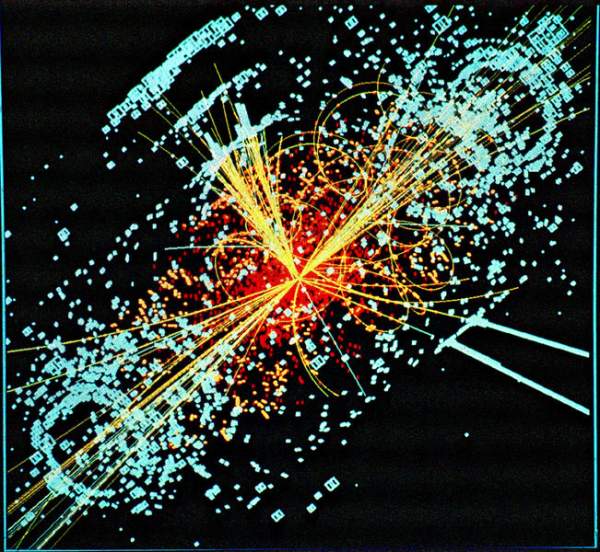

The Large Hadron Collider (LHC) is a particle accelerator that aims to revolutionize our understanding of our universe. The Worldwide LHC Computing Grid (LCG) project provides data storage and analysis infrastructure for the entire high energy physics community that will use the LHC.

The LCG, which was launched in 2003, aims to integrate thousands of computers in hundreds of data centers worldwide into a global computing resource to store and analyze the huge amounts of data that the LHC will collect. The LHC is estimated to produce roughly 15 petabytes (15 million gigabytes) of data annually. This is the equivalent of filling more than 1.7 million dual-layer DVDs a year! Thousands of scientists around the world want to access and analyze this data, so CERN is collaborating with institutions in 33 different countries to operate the LCG.
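As a quick sanity check on the DVD comparison, here is a minimal sketch of the arithmetic. It uses the article's own conversion (1 petabyte = 1 million gigabytes) and assumes a dual-layer DVD holds 8.5 GB, which is the nominal capacity and not a figure the article gives. The function and constant names are purely illustrative.

```python
# Back-of-the-envelope check of the "1.7 million DVDs" figure.
GB_PER_PB = 1_000_000          # decimal units, as in the article
DUAL_LAYER_DVD_GB = 8.5        # assumption: nominal dual-layer DVD capacity


def dvds_per_year(petabytes_per_year: float) -> float:
    """Return how many dual-layer DVDs the yearly data volume would fill."""
    return petabytes_per_year * GB_PER_PB / DUAL_LAYER_DVD_GB


if __name__ == "__main__":
    dvds = dvds_per_year(15)
    print(f"{dvds:,.0f} dual-layer DVDs per year")
```

Under these assumptions the result comes out at about 1.76 million discs, consistent with the "more than 1.7 million" claim.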

Read the full article at: http://www.infoq.com/articles/lhc-grid

We have a cluster at NTUA too!!!!!

Google: GR-03-HEPNTUA !

CU !!

That’s great news! I wish I had known about it earlier, so I could have asked for a quote from the administrator(s) here at NTUA.

Keep on pushing the envelope, guys 🙂

We can show you the infrastructure anytime 🙂